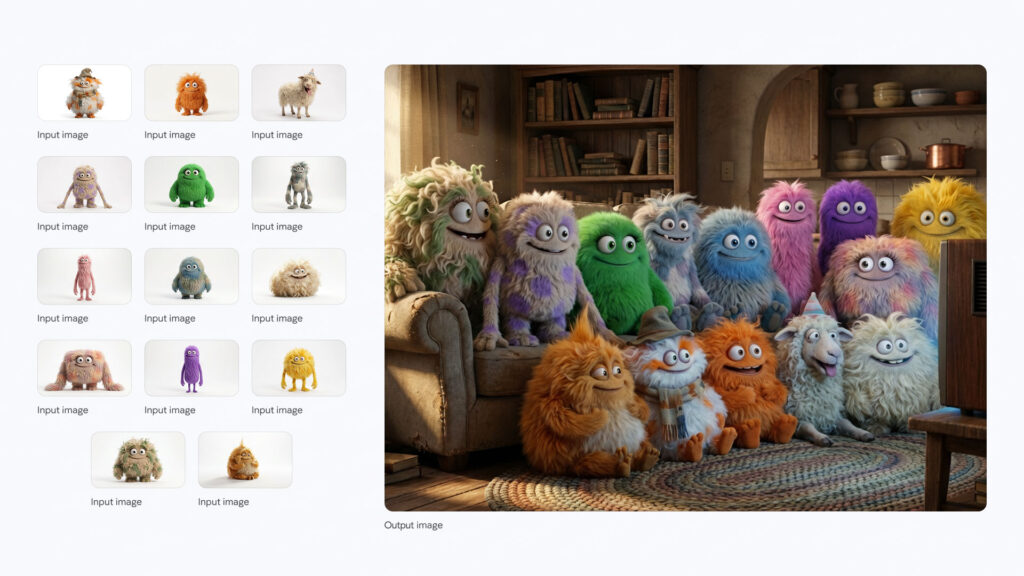

Google’s new Nano Banana Pro model for AI-generated imagery didn’t arrive with flashy announcements, but the impact is significant. It pushes image AI into a space that can genuinely compete with professional visual production, not just filter-based content or stylised mockups.

It marks a shift from AI as a “quick creative trick” to AI as a commercial-grade visual engine.

What’s different about Nano Banana Pro

AI-generated imagery has typically suffered from one or more of the following: plastic-looking surfaces, resolution limitations, visual inconsistencies, incorrect lighting, and messy text or fine-structure rendering. This version addresses those core weaknesses.

Key improvements:

- Native 2K generation with 4K upscale

This isn’t enhanced noise. It rebuilds texture and lighting with visual accuracy close to professional camera output.

- Realistic imperfections

Rather than producing overly polished images, it simulates natural lens behaviour, subtle distortions, grain, and dynamic exposure. Images feel captured, not composed by an algorithm.

- Much improved rendering of text and numbers

This solves a long-standing limitation, opening up practical uses for posters, packaging, digital ads, and UI prototypes without extra post-editing. According to Google, Gemini 3 Pro Image with Nano Banana Pro is designed to generate sharp, legible text inside images. It is not just good at aesthetics.

It is tuned for posters, intricate diagrams and detailed product mockups, where fonts, spacing and letter shapes actually matter. You can describe the typeface you want, or even ask it to imitate styles like condensed display fonts or casual handwriting, and it will render them directly in the image.

- Optimised for visual mood, not surface clarity

The system is tuned for aesthetic impact rather than sterile sharpness. That marks a philosophical turning point in AI’s visual direction.

- Watermarking using Google’s SynthID

Adds embedded traceability, which could become increasingly important as AI-generated visuals enter commercial and regulatory environments.

Annotated Diagram Capability

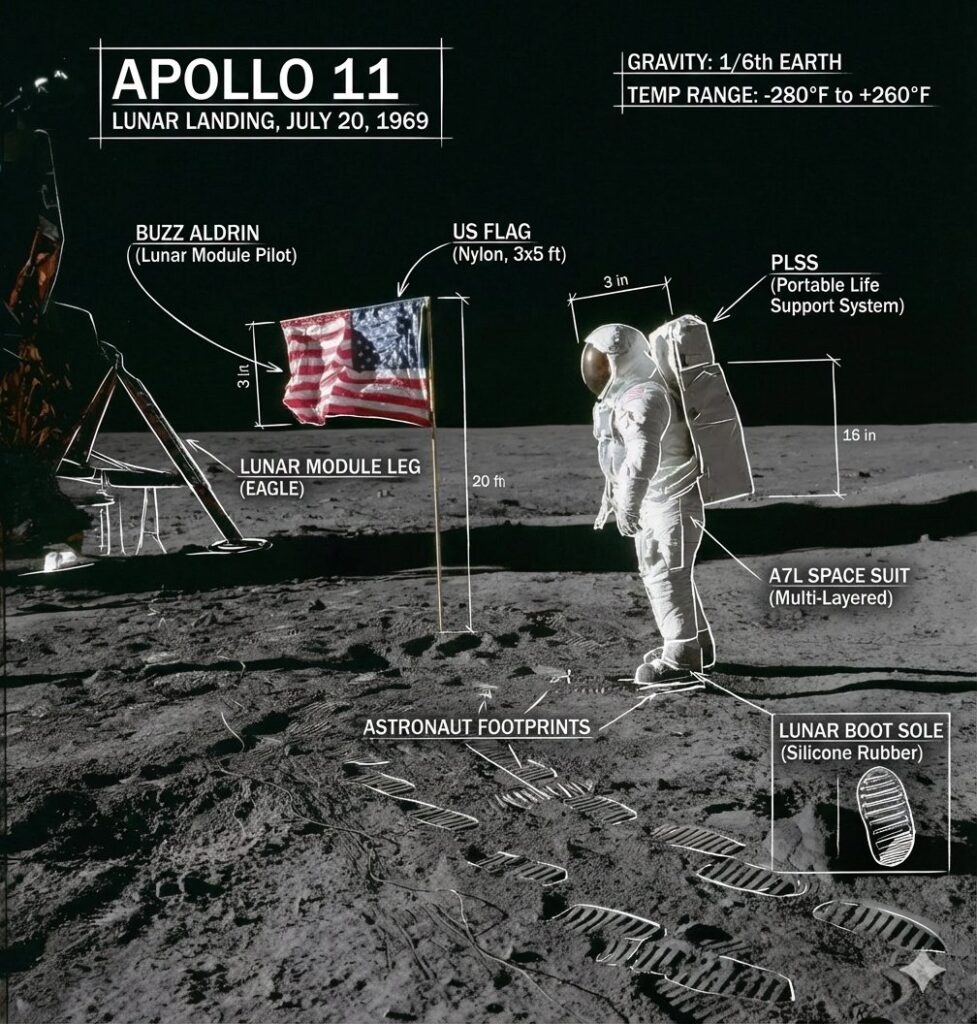

Early testers have demonstrated that Nano Banana Pro can generate surprisingly accurate mock interface designs, technical layout sketches and annotated visuals simply through prompting.

Creators on X have shown examples where they describe diagrams like:

A UI layout concept of a mobile app with arrows and text labels, flat design style

The system not only generates the layout but also places diagram arrows, labels, and reference text cleanly, without the typical image-model distortion. This ability to blend visual aesthetics with functional annotation suggests potential for rapid prototyping, interface planning, and even educational content.

Google also frames Nano Banana Pro as an image model that leverages real-world knowledge and reasoning, not just style transfer. In the official examples, it turns structured information into educational visuals. It can annotate pictures, create infographics from data, and convert handwritten notes into clean diagrams.

This is the same family of capability people are showing online with annotated moon landings and rocket diagrams, where the model overlays arrows, labels and callouts on top of a coherent scene in one shot.

It is still experimental, but it marks a shift toward instruction-friendly image generation rather than just aesthetic rendering.

Why this matters

Nano Banana Pro is the first model to realistically support design-level image creation without requiring professional hardware or a large team.

It has implications for:

- Solo creators and small agencies

Significant reduction in the cost barrier for high-quality visuals.

- Marketers and advertisers

Faster asset creation, fewer dependencies on outsourced designers.

- Product developers

Early-phase visualisation and branding mockups now take minutes instead of days.

- Content platforms

Expect a surge in aesthetic-first visual content as quality and speed improve.

Most importantly, it suggests a near future in which the bottleneck is not technical capacity but taste, direction, and concept clarity.

| Industry | How Nano Banana Pro can be used |
|---|---|
| Advertising | Digital campaigns, poster mockups, visual variations |
| Content creation | Social visuals that mimic pro photography styles |
| Product design | Concept styling, packaging ideas |
| Fashion & branding | Editorial-style scenes and fabric rendering |
| Film & animation | Pre-visualisation and layout concepts |

Risks and challenges

- Visual saturation

When everyone can generate high-quality imagery quickly, differentiation becomes harder.

- Authenticity concerns

The more believable the visuals, the easier it is for misinformation to spread. Watermarking may become standard.

- Model bias

Outputs reflect the training dataset. Without proper prompt direction, visuals may lack cultural variation or originality.

- Technical requirements

High-end performance still depends on strong GPU access and stable infrastructure.

Localisation and text inside images

Another official focus is localisation. Nano Banana Pro can translate text inside images and regenerate the visual in another language while keeping layout, branding and composition intact.

The examples include product cans whose English labels are converted into Korean, and ad concepts that are localised from London to Japan or Mexico while preserving the core design.

This makes it useful for teams that need to see how a product, poster or billboard would look in multiple markets without rebuilding everything by hand.
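One way to keep such localisation requests consistent is to generate one edit instruction per market from a single template. The sketch below is illustrative only: `localisation_prompts`, the `PRESERVE` wording and the instruction phrasing are assumptions made for this example, not a documented Nano Banana Pro prompt format.

```python
# Hypothetical helper: the instruction wording is an assumption,
# not an official prompt format for Nano Banana Pro.
PRESERVE = "Keep layout, branding and composition unchanged."


def localisation_prompts(asset: str, markets: dict[str, str]) -> dict[str, str]:
    """Return one edit instruction per market, keyed by market name."""
    return {
        market: f"Translate all text in this {asset} into {language}. {PRESERVE}"
        for market, language in markets.items()
    }


prompts = localisation_prompts(
    "product can label",
    {"Korea": "Korean", "Japan": "Japanese", "Mexico": "Spanish"},
)
for market, prompt in prompts.items():
    print(f"{market}: {prompt}")
```

Each resulting string would be sent alongside the original image, so every market variant starts from the same source asset and the same preservation constraints.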

How to approach this shift

Rather than worrying about AI replacing designers, it’s more accurate to expect that designers who use AI effectively will work faster and command higher value. The role shifts from execution to creative direction and curation.

Suggested creative approach

- Start using AI for concept development rather than final production.

- Establish personal or brand visual style guides to maintain consistency.

- Combine AI-generated outputs with light manual edits to preserve authenticity.

- Learn how to prompt with reference-based direction, not merely descriptive language.

Sample prompt frameworks to test

Plain descriptions don’t suffice. Use camera language and visual intention:

Ultra realistic portrait lighting, shot on high-end mirrorless camera, natural imperfections, soft background blur, warm ambient light, 2K output

Product concept render, commercial studio setting, realistic texture, controlled lighting shadows, minimal aesthetic, 4K upscale

Cinematic environment visual, overcast daylight, lens distortion, natural color science, slight texture grain, realistic depth of field
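The prompts above share a loose structure: a subject, camera language, lighting, extra detail, and an output hint. A minimal sketch of that structure as a reusable template follows. The `ImagePrompt` class and its field names are hypothetical, chosen for this example, and the model does not require any particular ordering of the parts.

```python
from dataclasses import dataclass, field


@dataclass
class ImagePrompt:
    """Hypothetical container for the parts of one image prompt."""
    subject: str
    camera: str = ""
    lighting: str = ""
    details: list[str] = field(default_factory=list)
    output: str = ""

    def render(self) -> str:
        # Join the non-empty parts into one comma-separated prompt string.
        parts = [self.subject, self.camera, self.lighting, *self.details, self.output]
        return ", ".join(p.strip() for p in parts if p and p.strip())


portrait = ImagePrompt(
    subject="Ultra realistic portrait lighting",
    camera="shot on high-end mirrorless camera",
    lighting="warm ambient light",
    details=["natural imperfections", "soft background blur"],
    output="2K output",
)
print(portrait.render())
```

Keeping the parts separate makes it easy to vary one element at a time, for example swapping only the lighting, and compare the results.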

How to Try Nano Banana Pro

Nano Banana Pro is part of Gemini 3 Pro Image, so it is not a separate consumer app. Google exposes it through two main entry points:

• Gemini app and web

On the official page you can click “Try in Gemini” and open a chat-style interface where image generation is one of the tools. You describe what you want, add any aspect ratio or style requirements, and Gemini routes the request through Nano Banana Pro under the hood. (Source: Google DeepMind)

• Google AI Studio

For builders and more technical users, the “Try in Google AI Studio” button opens a playground where you can work with Gemini 3 Pro Image directly, test prompts, inspect parameters and then export code snippets to call the model via API in your own apps. (Source: Google DeepMind)

For teams already using Google Cloud, the same underlying model is reachable through the Gemini APIs and Vertex AI, but the fastest way for most people to experiment is simply through Gemini and AI Studio without touching infrastructure.

At this stage, the model is positioned for professional use rather than open experimentation, so access might require approval or business verification.

Final thought

Nano Banana Pro doesn’t just enhance AI imagery. It closes the gap between amateur and professional visual production. The next level of competition is not about who can generate, but who can imagine and direct with precision.

When high production quality becomes accessible, creative vision becomes the real advantage.

Expect more models to follow this trajectory. The visual AI race is pivoting from novelty to production-ready capability.